Digital sound

Categories: computer music sound synthesis

When we store sound digitally, we convert it to a sequence of sample values, rather than treating it as a continuous wave like we do with analogue sound.

To better understand how digital sound works, we will look at how we might capture digital sound from an analogue microphone, and then play it back through speakers. Then we will look at digital synthesis of sound.

Recording sound

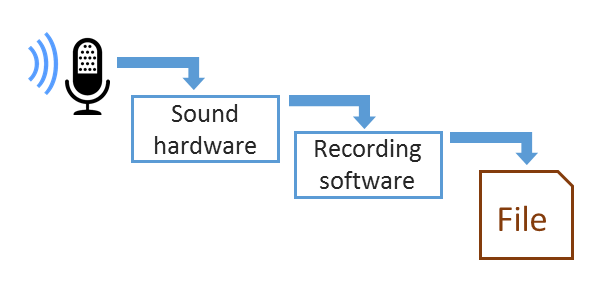

When you record a sound from a microphone on your computer or other device, it goes through the following stages:

The sound hardware takes the signal from the microphone and converts it to digital form. The recording software takes the digital sound information, and stores it to disk as a sound file.

Sound hardware

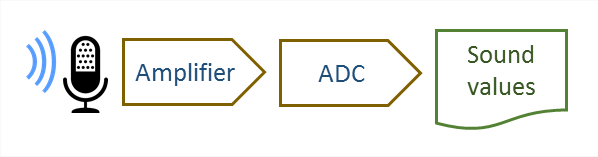

The sound hardware consists of an amplifier and an analogue to digital converter (ADC).

Most devices have built in recording hardware, although with a PC you can use a separate sound card to obtain higher quality.

If you use a USB microphone, the conversion hardware is included in the microphone itself, and the sound is digitised before it is sent over the USB connection. Your computer's sound hardware will be bypassed in that case.

Amplifier

The signal from most microphones is very weak, so it is amplified before it is digitised. This creates an electrical signal which represents the sound waveform.

Analogue to Digital Converter

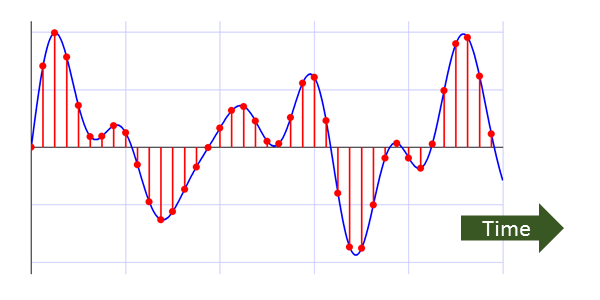

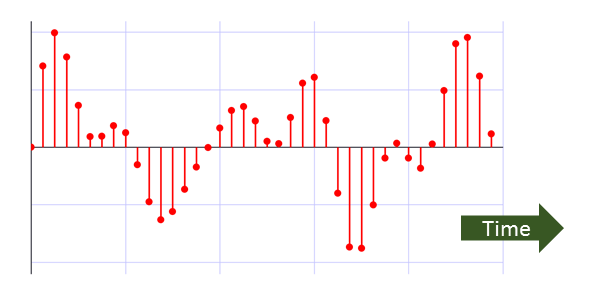

The electrical signal is called a continuous signal, because its value changes all the time. To create a digital sound we must measure the value at certain points in time. So instead of having a signal that changes continuously in time, we have a series of "snapshots" of the value at particular points in time. This process is called sampling. Typically we would sample thousands of times per second. For example, the digital sound on a CD is sampled 44,100 times per second.
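As a rough illustration of sampling, the sketch below treats a Python function of time as the continuous signal and measures it at evenly spaced instants. The names `sample_signal` and `tone` are made up for this example, and the very low rate of 8 samples per second is chosen only to keep the output short.

```python
import math

def sample_signal(signal, sample_rate, duration):
    """Measure a continuous signal (a function of time in seconds)
    at regular intervals, returning a list of snapshots."""
    count = round(sample_rate * duration)
    return [signal(n / sample_rate) for n in range(count)]

def tone(t):
    # A 1 Hz sine wave standing in for the continuous electrical signal.
    return math.sin(2 * math.pi * 1.0 * t)

# 8 samples per second for 1 second gives 8 snapshots.
samples = sample_signal(tone, sample_rate=8, duration=1.0)
print(len(samples))
print([round(s, 3) for s in samples])
```

A real recording would use a much higher rate, but the idea is the same: only the values at the sample instants are kept.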

The electrical signal also has a continuous value - the voltage can be any value (within the minimum and maximum values allowed). When we digitise the signal, we only allow a fixed set of values. For example:

Here the input signal (voltage) can have any value between 0.0 and 1.0. When we sample the signal, we only allow a certain number of different levels, in this case 8. We pick the closest level to the actual value. It might not be exactly right, but it is a close approximation. This is called quantisation.
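Quantisation can be sketched in a few lines. This example assumes the 8 levels are evenly spaced from 0.0 to 1.0 with a level at each end (so level 0 is 0.0 and level 7 is 1.0). The function name `quantise` is hypothetical, and real converters may place their levels differently.

```python
def quantise(value, levels=8, lo=0.0, hi=1.0):
    """Return the index (0 to levels - 1) of the level closest to value.

    Assumes evenly spaced levels with one at lo and one at hi.
    """
    value = min(max(value, lo), hi)           # clip to the allowed range
    position = (value - lo) * (levels - 1) / (hi - lo)
    return int(position + 0.5)                # round to the nearest level

for v in (0.0, 0.3, 0.93, 1.0):
    print(v, "->", quantise(v))
```

The input 0.3 lies between level 2 (about 0.286) and level 3 (about 0.429), and level 2 is closer, so that is the value stored.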

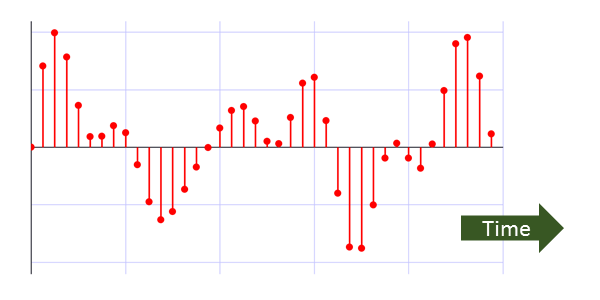

If we use 8 levels, it means that each value can be stored as a 3 bit quantity. In practice we would never use 8 levels, because the quality would be very poor (8 is just used to make the diagram simpler). Usually we use 65536 levels, so each sample takes 16 bits, or 2 bytes.
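The relationship between the number of levels and the storage needed can be checked with a short calculation. The helper names below are hypothetical, and the rate of 44,100 samples per second (the CD rate) is an assumption used only to give a concrete data rate.

```python
def bits_per_sample(levels):
    """Smallest number of bits that can label every quantisation level."""
    return (levels - 1).bit_length()

def bytes_per_second(sample_rate, levels, channels=1):
    """Uncompressed data rate, assuming each sample uses whole bytes."""
    bytes_each = (bits_per_sample(levels) + 7) // 8
    return sample_rate * bytes_each * channels

print(bits_per_sample(8))               # 3
print(bits_per_sample(65536))           # 16
print(bytes_per_second(44100, 65536))   # 88200
```

So one channel of 16 bit sound at that rate needs 88,200 bytes for every second of audio before any compression.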

Sound recording software

The sound hardware produces sound data as raw, unformatted data in memory. The job of the sound recording software is to control the process (starting and stopping the recording), and to write the data to disk in a standard format such as WAV or MP3.
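To give a feel for the "write the data to disk" step, here is a minimal sketch using Python's standard `wave` module. It assumes the raw data is already available as a list of 16 bit mono samples. The function name `write_wav`, the sample values and the file name are invented for illustration, and no actual recording from hardware takes place.

```python
import os
import struct
import tempfile
import wave

def write_wav(path, samples, sample_rate=44100):
    """Store 16 bit mono samples (ints from -32768 to 32767) as a WAV file."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)            # mono
        wav.setsampwidth(2)            # 2 bytes = 16 bits per sample
        wav.setframerate(sample_rate)
        # Pack the numbers as little-endian signed 16 bit integers.
        wav.writeframes(struct.pack("<%dh" % len(samples), *samples))

# Made-up sample values standing in for data from the sound hardware.
raw = [0, 1000, 2000, 1000, 0, -1000, -2000, -1000]
path = os.path.join(tempfile.gettempdir(), "recording_sketch.wav")
write_wav(path, raw)
```

Note that 16 bit WAV files store signed values centred on zero, so the 65536 levels run from -32768 to 32767 rather than from 0 to 65535.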

Playing back digital sound

When you play a sound file from your computer or other device, through speakers or headphones, it goes through the following stages. These are more or less the reverse of the stages in recording a sound:

The player software reads the sound data from file, and sends the data to the hardware, which converts it to an electrical signal, which in turn drives the speaker.

Sound player software

The sound player software reads the sound data from a sound file in a standard format such as WAV or MP3. It uncompresses the data, if necessary, and passes it to the sound hardware.
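The reading step can be sketched with Python's standard `wave` module. To keep the example self-contained it first builds a tiny WAV file in memory, then reads it back. The function name `read_wav` is hypothetical, and the sketch only handles uncompressed 16 bit mono data, not MP3 or other compressed formats.

```python
import io
import struct
import wave

def read_wav(source):
    """Return (sample_rate, samples) from a 16 bit mono WAV file."""
    with wave.open(source, "rb") as wav:
        if wav.getsampwidth() != 2 or wav.getnchannels() != 1:
            raise ValueError("this sketch only handles 16 bit mono files")
        rate = wav.getframerate()
        frames = wav.readframes(wav.getnframes())
    # Unpack the bytes as little-endian signed 16 bit integers.
    samples = list(struct.unpack("<%dh" % (len(frames) // 2), frames))
    return rate, samples

# Build a small in-memory WAV file to stand in for a file on disk.
buffer = io.BytesIO()
with wave.open(buffer, "wb") as wav:
    wav.setnchannels(1)
    wav.setsampwidth(2)
    wav.setframerate(8000)
    wav.writeframes(struct.pack("<5h", 0, 1000, -1000, 32767, -32768))
buffer.seek(0)

rate, samples = read_wav(buffer)
print(rate, samples)
```

A real player would then hand these numbers to the sound hardware, which is outside what this sketch covers.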

Sound hardware

The sound hardware consists of a digital to analogue converter (DAC) and an amplifier.

Most devices have built in sound hardware, although with a PC you can use a separate sound card to obtain higher quality.

If you use USB headphones, the conversion hardware is built in to the headphones. The sound is sent over the USB connection in digital form, and converted to an electrical signal by the headphones. Your computer's sound hardware will be bypassed in that case.

Digital to Analogue Converter

The sound file stores the waveform as a list of numbers which represent the sound level.

As an example, our sound file might use the numbers 0 to 7 to represent a signal level of 0.0 to 1.0. The DAC takes the numbers and converts them back into an electrical signal:

Although the electrical signal is continuous (it can take any value within the range), the output of the DAC can only take values which match the quantised levels of the input data. However, the sound system filters the output to produce a smoother curve (in green).
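The conversion and smoothing described above can be imitated in software. In this sketch `dac` maps the numbers 0 to 7 back to levels between 0.0 and 1.0, `hold` repeats each level to mimic the stepped output, and `smooth` applies a simple moving average. All three names are hypothetical, and the moving average is only a crude stand-in for the filter in real sound hardware, which works quite differently.

```python
def dac(codes, levels=8, lo=0.0, hi=1.0):
    """Convert stored numbers (0 to levels - 1) back to signal levels."""
    return [lo + (hi - lo) * code / (levels - 1) for code in codes]

def hold(values, repeat=4):
    """Keep each level for `repeat` time steps, giving a staircase shape."""
    return [v for v in values for _ in range(repeat)]

def smooth(values, width=4):
    """Moving average over the last `width` values (a crude smoothing filter)."""
    out = []
    for i in range(len(values)):
        window = values[max(0, i - width + 1): i + 1]
        out.append(sum(window) / len(window))
    return out

codes = [0, 2, 5, 7, 5, 2, 0]
staircase = hold(dac(codes))
print([round(v, 2) for v in smooth(staircase)])
```

The staircase only ever contains the 8 allowed levels, while the smoothed version passes through values in between.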

Amplifier

The output of the DAC must be amplified to provide enough power to create a sound. Most devices such as phones or laptops only supply enough power to drive a set of headphones. If you want to use loudspeakers, you will need an additional amplifier. For example, the speakers you might use with a PC often have their own built-in amplifier.

The output signal will not be exactly the same as the input signal because it has been sampled and quantised, both of which distort the signal. But the distortion shown here is deliberately exaggerated to illustrate the effect. The signal is typically sampled tens of thousands of times per second, and quantised to tens of thousands of levels, creating very good quality sound.
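To see why more levels give better quality, we can estimate the largest error that rounding to the nearest level can introduce. This sketch assumes evenly spaced levels covering the range 0.0 to 1.0 with a level at each end. The function name `max_rounding_error` is made up for the example.

```python
def max_rounding_error(levels, lo=0.0, hi=1.0):
    """Largest possible gap between a value and its nearest level."""
    step = (hi - lo) / (levels - 1)   # distance between neighbouring levels
    return step / 2                   # worst case is halfway between two levels

for levels in (8, 256, 65536):
    print(levels, max_rounding_error(levels))
```

With 8 levels the error can be about 7% of the full range, while with 65536 levels it is less than a thousandth of a percent.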

Digital synthesis

Digital synthesis works in a completely different way to analogue synthesis.

Generally it works by calculating the values of the sound at each point in time, using software. This has the advantage that the values and timings are mathematically exact. It also means that synthesis can be changed and extended without the need to alter the hardware, provided the processor is powerful enough to perform the calculations in real time.
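As a simple example of calculating sound values in software, the sketch below generates the samples of a sine wave as 16 bit integers. The function name `synth_sine`, the 440 Hz frequency, the 44,100 samples per second rate and the amplitude are all assumptions chosen for illustration. Real synthesis software would usually combine many such calculations.

```python
import math

def synth_sine(frequency, duration, sample_rate=44100, amplitude=0.5):
    """Calculate a sine wave as a list of 16 bit sample values.

    amplitude is a fraction of full scale (1.0 would reach 32767).
    """
    count = round(duration * sample_rate)
    peak = amplitude * 32767
    return [
        round(peak * math.sin(2 * math.pi * frequency * n / sample_rate))
        for n in range(count)
    ]

# One hundredth of a second of a 440 Hz tone.
samples = synth_sine(440.0, 0.01)
print(len(samples), samples[:5])
```

Changing the sound is just a matter of changing the formula, for example adding a second sine wave at a different frequency, with no change to the hardware.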
